This last week, I presented a few sessions at ODTUG’s KSCOPE 2019 conference in Seattle. One of these sessions was with my wonderful co-presenter and Oracle Application Cloud expert, Opal Alapat. I connected with her when the call for papers opened and asked her if there would be interest in doing a session with Power BI connect to the Essbase in the Oracle Applications cloud, (OAC). There was no desire to do a bake-off or competitive session, just a morbid curiosity about what options there were to connect the two and the outcome of building reports out of valuable data with such a powerful BI tool.

Opal agreed it was a good idea and as the conference chair, took it on to present the opportunity for us to present this to the conference committee. It is understandable that it could be received poorly, considering the idea vendor history and a fear of blatant marketing sessions. Lucky for us, we both have a long history of presenting strong technical sessions, a great love of Oracle technology, so this wonderful and brave conference gave us a chance to show our stuff.

The Technical World is Shrinking

I’ve taken on this type of new frontier before, realizing that few IT shops are truly single platform, so I thank them publicly for the opportunity and the attendees demonstrated their appreciation with a ton of questions during and even more so after the session.

We started the session making it clear that we would demonstrate both the Oracle Application Cloud, with sample data from Essbase connected to Oracle Analytics Cloud as well as Power BI. We also stated we had no interest in a competitive bake- off but hoped to simply show the opportunities to provide more value with the data.

We both went into clear explanations around the architecture, requirements and licensing that would be required as part of our solution, and since I was working in a cross-platform cloud solution, the hurdles I faced, options I attempted to work around them, what was successful, those that failed, etc.

The Use-Case

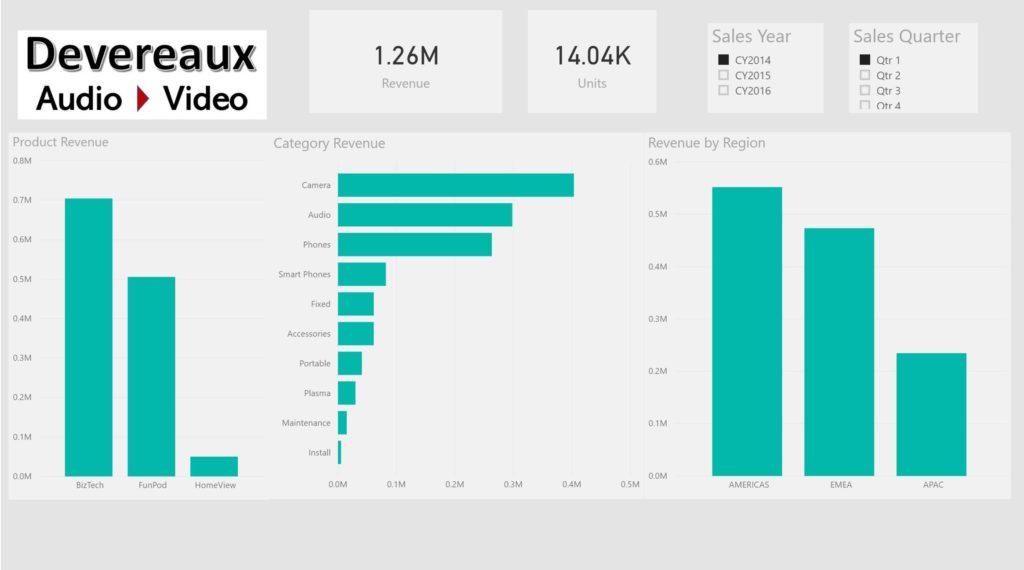

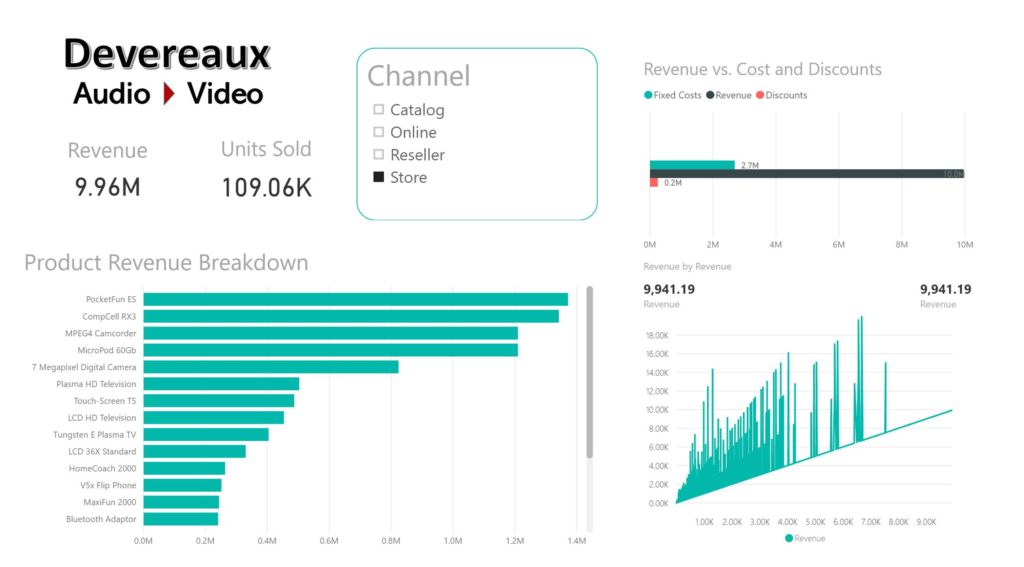

The OAC environment that Opal gave me access possessed an example schema/data based on an Audio-Video store revenue for multiple years. I’d never worked with the OAC before, but I was quickly able to find five methods to connect Power BI to it, either to import or to direct connect to the data:

- Data Direct makes a Rest API to connect to the Oracle Cloud.

- Use the web interface with Power BI and “scrape the website”, allowing PBI to build the table from example.

- Connect with an ODBC driver

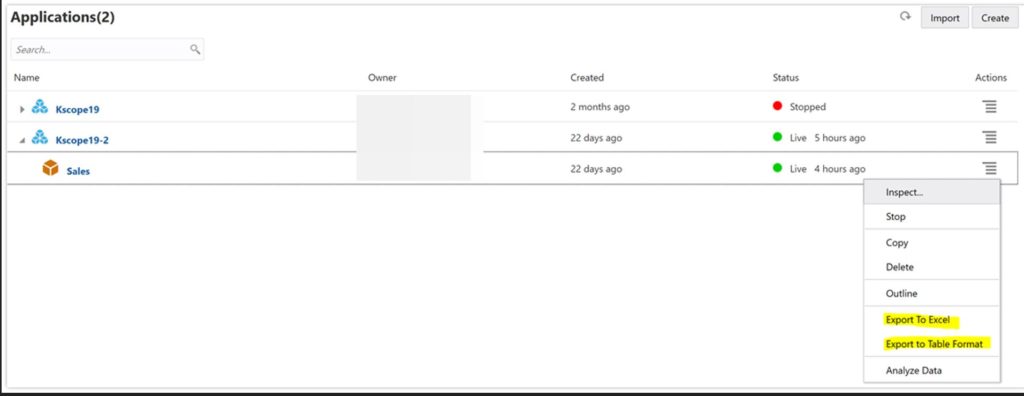

- Via the OAC interface, export to Excel and then import into Power BI

- Via the OAC interface, export to a table and then import into Power BI as a CSV file.

Rest API

Although #1 may sound like a great option, the Rest API ran via a docker container or dedicated server to run the service. I have the container running on my laptop, but along with a more complicated setup, the connection dropped intermittently and the container crashed from time to time.

Web “Scraping”

Connecting to the website to scrape the data isn’t difficult, as Power BI has the ability to bypass the HTML and simply build out the table by example. You enter in a few examples of what data you want it to build a table out of and it’s quite intuitive and will take it from there. I had made a goal to have a repeatable, automated refresh option and with the authentication to OAC, this make web scraping less attractive.

ODBC Driver

This was the option that I thought, and in my research, appeared to be the most viable and failed terribly. The authentication method to OAC and steps that Oracle has taken to use preferred tools has made ODBC the least simple method of connecting to the data and I chose to give up vs. working towards a functioning test.

Export to Excel

The export to Excel offers a cube format for those striving for:

- the cube “feel” when modeling

- want to use power pivot or more familiar with Excel than Power BI

As I’m definitely a RDBMS DBA/dev over an Excel person, this wasn’t my preference.

Export to table

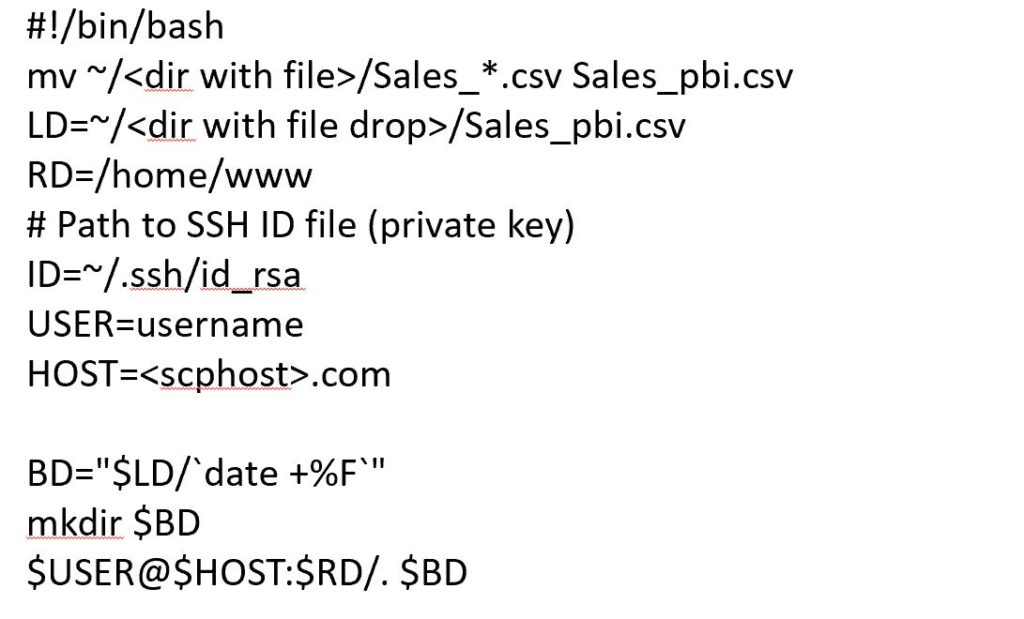

This turned out to be my favorite. The export was a simple table, in a CSV format that was easy to import into Power BI and easy to build visuals and do predictive analysis on. The table was straight forward and required no formatting or massaging once brought into Power BI. To build out a model that could be updated on a regular basis, I was able to schedule a job to export the table on a regular interval to a shared folder and then use a simple shell script to refresh it, renaming the file first to a generic name vs. the unique name that is be default. I then updated the data source settings to point to the shared folder, which means no manual processing once the reports were built.

Huge Win-Win

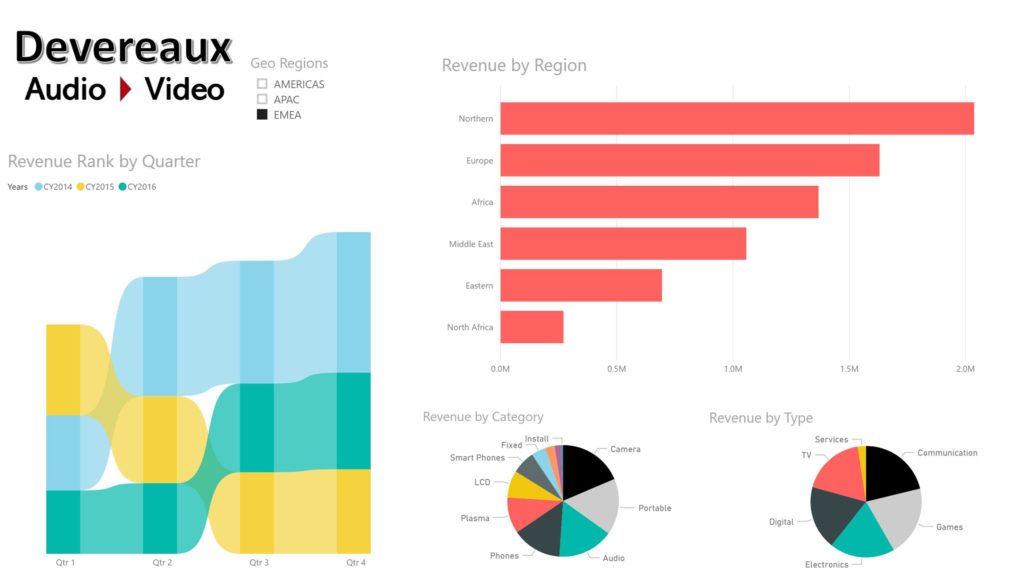

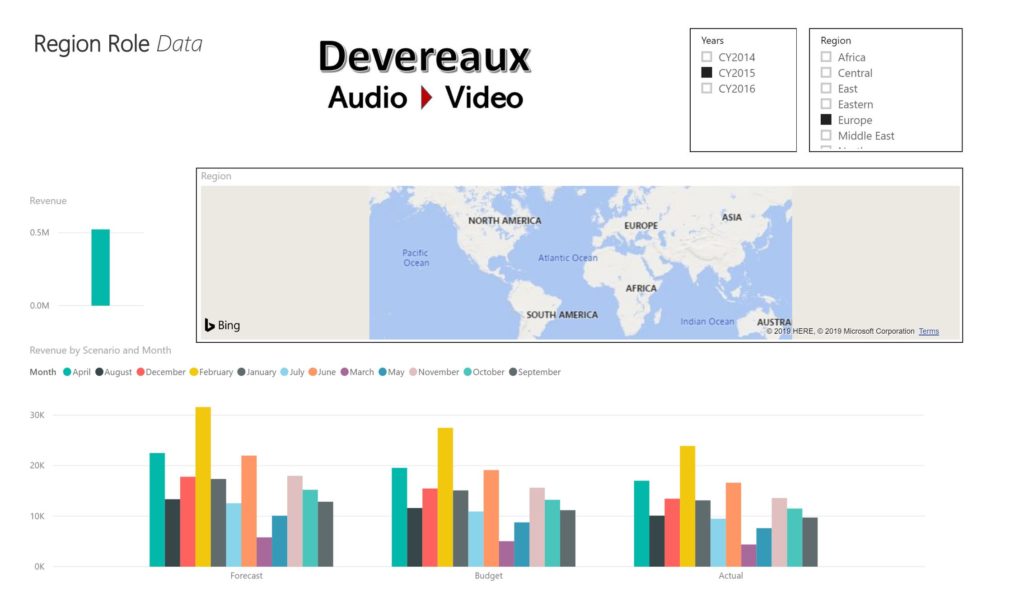

To complete my demonstration, I went through my Power BI reports, both the design and the row level security by region and user, as well as how easy it was, due to the data structure, for me to build out visualizations with predictive analysis. This was really crucial since I don’t have a background or any experience with the data that was provided to me. I was simply the report designer and let Power BI do the heavy lifting.

Take-Aways from the Session

- Attendees are often using Power BI with these Oracle data solutions, but have very little reference material on how to be successful with it.

- The ability to direct connect via the rest API was a bit shaky and complicated to set up, where the ability to automate the export to flat file option, (either cube or table) was the most stable.

- ”Web Scraping” using and using the build by example and then creating templates to automate the data massage is possible with Essbase, but does require manual refreshes due to credential authentication.

- The reports in Power BI were robust, multi-tab reports and dashboards was available with even simple data. The predictive analysis and quantity of visualizations allowed the user to build out expanded reports, both interactive, as well as paginated.

- For those that have on-premises Essbase, the Essbase connector is available for download, (brand new!) and can be used for those environments with Power BI.

Sample Reports

One of the great things about the use case from Opal and my session, is that the data is all sample data and can be made available to everyone, demonstrating how easy it is to get value by anyone, even someone like me who’s never worked with it before!

If you’re interested in seeing a high level example of the reports created, I’ve added a screenshots of the report here:

Now each of the attendees for KSCOPE have access to the PBIX files with sample data to work with, including the cube example. All the reports, unlike the screenshots, are interactive, allowing the user to dig into the data and assign role level security to those using the report depending on region and login name, (this role would have to be updated if using something than Azure Active Directory to log in, of course.)

Have a happy 4th of July everyone!!

One comment on “Power BI Reporting with Oracle Essbase”

Comments are closed.